Indian Government Breach, Disclosure

Last month, Sakura Samurai announced that we had uncovered a large number of critical vulnerabilities. To prevent threat actors from exploiting them, we redacted the specific issues and gave only a brief overview of what we had discovered. After working with the NSCS, we have been given the green light to disclose more specific details, and all 34 pages of our reported vulnerabilities have been adequately remediated.

Credits

All of the following hackers had a specific role to play during the research process. We ask for proper attribution in any media coverage.

Sakura Samurai Members

Jackson Henry

Robert Willis

Aubrey Cottle

John Jackson

Collaborators

Zultan Holder

Impact

In reality, the impact was beyond critical and could have allowed us to perform further exploitation. With the level of access we had obtained, we could have set up crypto miners, dropped ransomware, stolen a wide variety of data, maintained persistence, and pivoted throughout the network. We had access not only to the Indian Government but also to a wide array of state entities, which are listed in the technical details below.

Resolution

As we stated in our previous writeup, getting disclosure and mitigation was challenging, as we had no indication of what to expect and no response to the specifics of our report. We received assistance from the DC3 but still had to escalate initially via Twitter. NSCS top leadership ultimately helped us mitigate the vulnerabilities and, in an unprecedented move, resolved nearly all 34 pages of findings. Based on what Sakura Samurai had previously heard from other researchers, this had not been done before, and we noted widespread community frustration about reported issues that had gone unresolved. Working in conjunction with government entities, we were able to help ensure the safety of many citizens and protect intellectual property, PII, financial data, and government functionality. Sakura Samurai commends the NSCS and its leadership for assisting in this resolution.

Enumeration & Exposed PII

If you haven’t read our initial writeup, please do so first for context on some of these processes. Some aspects are similar to, and draw inspiration from, that previous redacted writeup.

The research process began when Jackson Henry, Zultan Holder, and Robert Willis started performing reconnaissance under the NCIIPC RVDP (Responsible Vulnerability Disclosure Program). First, Jackson Henry and Zultan Holder identified subdomains using Chaos and Subfinder. Once the subdomains were identified, they began looking for exposed /.git directories containing credentials for applications, servers, and more.

At the same time, through further subdomain enumeration with amass and directory fuzzing with dirsearch, Robert Willis discovered a subdomain of the Satara Police Department’s website with an exposed /files/ directory. Inside, Willis found a large number of sensitive police reports and even some forensic data. The inadvertent exposure put many of the people who had trusted the Satara Police Department at risk. Sensitive documents should not be uploaded to a web-accessible location, especially by an organization that does not know how to configure its web application correctly.

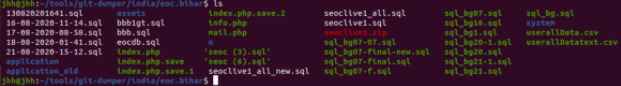

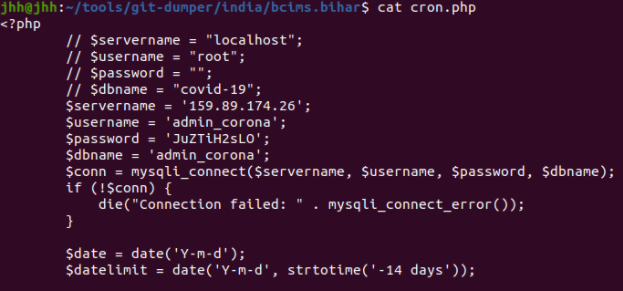

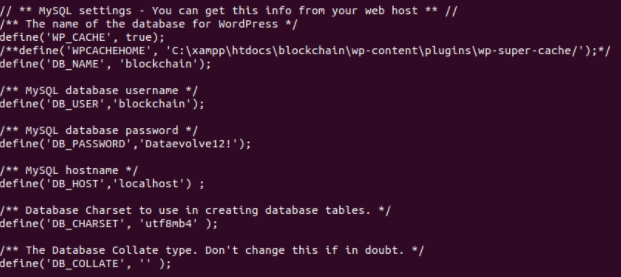

Shortly after, Jackson Henry, Robert Willis, Aubrey Cottle, and John Jackson began further subdomain enumeration with amass, web application fuzzing with dirsearch, port scans of various servers with Nmap and RustScan, low-hanging-fruit vulnerability identification with Nuclei, and acquisition of exposed .git directories with git-dumper. Within hours, Sakura Samurai had identified 10 exposed .git directories. The projects exposed in these directories contained hard-coded credentials for multiple servers and applications, including MySQL, SMTP, PHPMailer, and WordPress.

List of Organizations with Exposed .git directories:

Government of Bihar

Government of Tamil Nadu

Government of Kerala

Telangana State

Maharashtra Housing and Development Authority

Jharkhand Police Department

Punjab Agro Industries Corporation Limited

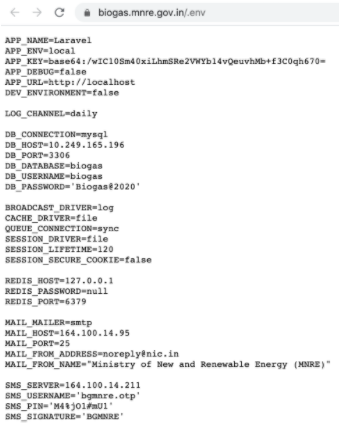

Using the same tools, Sakura Samurai then discovered .env files exposed on the client side, revealing even more credentials. Most of these files included credentials for SMTP, MySQL, Redis, and SMS services, along with Google reCAPTCHA keys and secrets. In total, there were 23 separately exposed .env files.

List of Organizations with Exposed .env files:

Government of India, Ministry of Women and Child Development

Government of West Bengal, West Bengal SC ST & OBC Development and Finance Corp.

Government of Delhi, Department of Power GNCTD

Government of India, Ministry of New and Renewable Energy

Government of India, Department of Administrative Reforms & Public Grievances

Government of Kerala, Office of the Commissioner for Entrance Examinations

Government of Kerala, Stationery Department

Government of Kerala, Chemical Laboratory Management System

Government of Punjab, National Health Mission

Government of Odisha, Office of the State Commissioner for Persons with Disabilities

Government of Mizoram, State Portal

Embassy of India, Bangkok, Thailand

Embassy of India, Tehran

Consulate General of India

Only 14 entities appear above despite 23 exposed .env files, because some of the state entities exposed .env files for multiple applications.

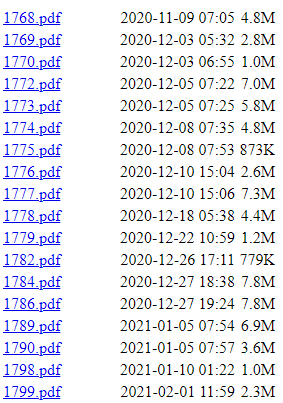

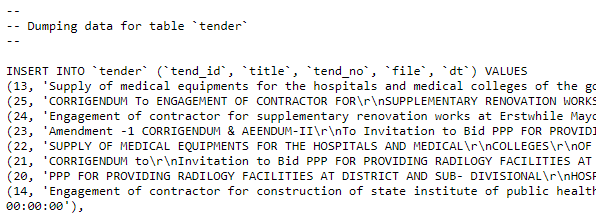

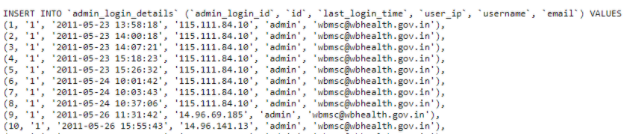

We also found a client-side exposed SQL dump on the Government of West Bengal’s Medical Services Corporation (WBMSC) application.

Due to the nature of the vulnerability, we cannot show the PII, but the SQL dump contained a record for everyone who had used WBMSC’s “Contact Us” form, revealing their inquiries along with IDs, names, emails, addresses, and contact numbers.

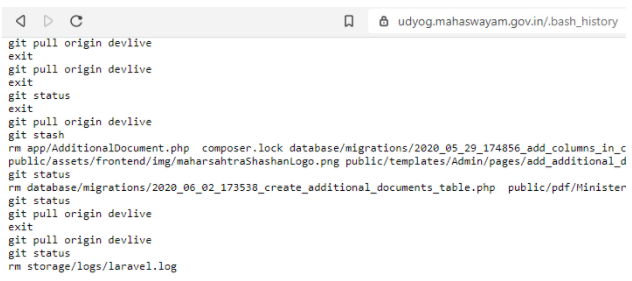

We discovered three client-side exposed .bash_history files containing sensitive data. Two of the three exposed passwords for local databases.

List of Organizations with Exposed .bash_history files:

Government of Kerala

Government of Maharashtra

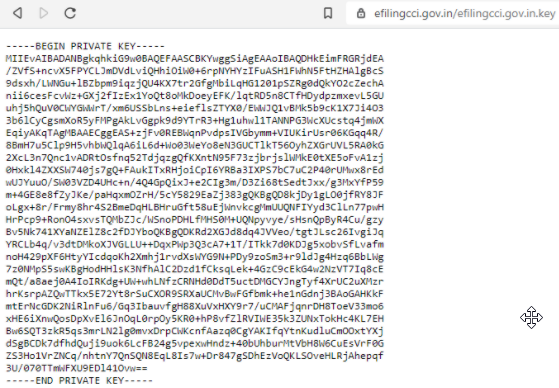

Our research also turned up multiple entities with RSA private keys exposed on the client side.

In total, we observed five exposed private keys.

List of Organizations with Exposed Private Keys:

Government of Kerala, Service and Payroll Administrative Repository

Government of West Bengal, Directorate of Pension, Provident Fund & Group Insurance

Government of India, Competition Commission of India

Government of Chennai, The Greater Chennai Corporation

Government of Goa, Captain of Ports Department

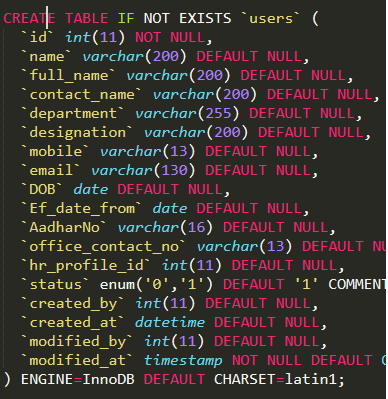

Jackson Henry then dug up another client-side exposed SQL file, this time a dump of 13,000 PII records from the Government of India’s youth employment program, Deen Dayal Upadhyaya Grameen Kaushalya Yojana (DDU-GKY).

Exposed PII:

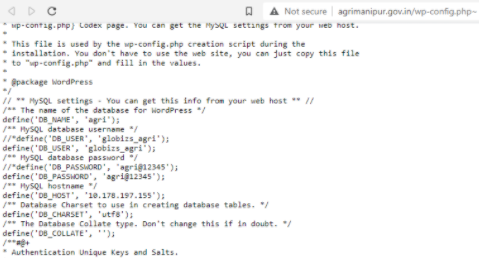

Finally, one additional set of database credentials was discovered through a client-side exposed wp-config.php file belonging to the Government of Manipur’s Department of Agriculture.
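Every finding in this section came down to files that should never have been reachable from the web. The Python below is a minimal defensive sketch, assuming you can read the deployed web root of a server you administer. It walks that directory and flags the artifact types described above: .git directories, .env, .bash_history and wp-config.php files, .sql dumps, and PEM private keys. The pattern lists and the `audit_webroot` function are hypothetical and illustrative, not a tool used in this research, and they will not catch every variant (for example, renamed dumps or `.env.production`).

```python
import os
from pathlib import Path

# Hypothetical patterns, based only on the artifact types described above.
SENSITIVE_DIR_NAMES = {".git"}
SENSITIVE_FILE_NAMES = {".env", ".bash_history", "wp-config.php"}
SENSITIVE_SUFFIXES = {".sql"}
# Matches both "BEGIN ... PRIVATE KEY-----" and "END ... PRIVATE KEY-----" PEM lines.
PEM_PRIVATE_KEY_MARKER = b"PRIVATE KEY-----"


def looks_like_private_key(path: Path) -> bool:
    """Return True if the first 4 KB of the file contain a PEM private-key marker."""
    try:
        with path.open("rb") as fh:
            return PEM_PRIVATE_KEY_MARKER in fh.read(4096)
    except OSError:
        # Unreadable files (permissions, broken symlinks) are skipped, not flagged.
        return False


def audit_webroot(root: str) -> list[str]:
    """Walk `root` and return sorted relative paths that should not be web-accessible."""
    root_path = Path(root)
    findings = []
    for dirpath, dirnames, filenames in os.walk(root_path):
        current = Path(dirpath)
        # Report sensitive directories, then prune them so os.walk does not descend.
        for name in list(dirnames):
            if name in SENSITIVE_DIR_NAMES:
                findings.append(str((current / name).relative_to(root_path)))
                dirnames.remove(name)
        for name in filenames:
            path = current / name
            if (
                name in SENSITIVE_FILE_NAMES
                or path.suffix.lower() in SENSITIVE_SUFFIXES
                or looks_like_private_key(path)
            ):
                findings.append(str(path.relative_to(root_path)))
    return sorted(findings)
```

For example, calling `audit_webroot("/var/www/html")` (an example path only) returns a list of relative paths to review and move out of the web root. Pruning `.git` keeps the report to one line per repository instead of listing every object file inside it.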

Exploitation & Privilege Escalation

At this point, we had already documented a large number of vulnerabilities. There was no need to access most of the databases or use the credentials. Still, we were confident that credentials usable only from the organizations’ internal subnets could have been exploited through footholds gained on externally accessible services for which we also had credentials.

We also wanted to avoid unnecessary exposure to the PII that was undoubtedly sitting in many of the databases we had credentials to, so we moved from the enumeration phase to testing specific exploitation methodology. Our enumeration and active scanning had already identified multiple attack vectors.

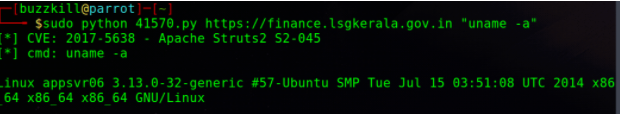

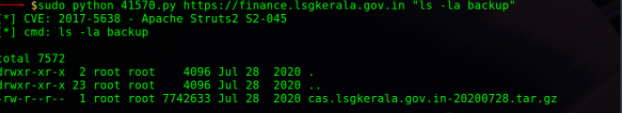

One of these was the Government of Kerala Local Self Government’s financial server. Through our enumeration, we discovered that the server was running Apache Tomcat with a vulnerable application. John Jackson began researching and quickly found that it was likely vulnerable to CVE-2017-5638, the Apache Struts remote code execution flaw. He downloaded a public exploit from Exploit Database which, if successful, would allow remote code execution. Jackson then ran the exploit and confirmed successful execution of basic commands.

After some enumeration, Jackson found a “backup” folder containing a tar archive of application components, which would have allowed him to exfiltrate financial records. At this point, he stopped testing.

The remote code execution vulnerability would have allowed him to exfiltrate configuration files or intellectual property, or to modify, destroy, or disrupt the server. With this level of access, he could also have set up post-exploitation tooling, such as a Cobalt Strike C2 beacon, and pivoted to other connected network resources.

Post-RCE Host Environment Recon & Exploit Chaining, Resulting in Total Application User Session Takeover

Aubrey Cottle took a deeper look at the system affected by the RCE vulnerability described above, beginning with a cursory exploration of its filesystems and installed applications. He was particularly curious about this environment because it appeared to be the application server for a government-operated financial application. Its exact purpose remained unclear, as the stored information was not in English, though the basics were easy to surmise. Cottle also added notes from the perspective of a seasoned threat actor, covering potential vectors for lateral movement and what he would have done to sidestep existing limitations, continue escalating, and exfiltrate data at scale.

Some minor hardening was already in place: certain directories that would otherwise have allowed a much simpler remote shell and toolkit to be deployed were read-only. Privilege escalation was not attempted, but based on our assessment it would have been trivial. All actions were performed as the Tomcat process owner, ‘www-data’.

All configuration files on the system were enumerated with the Linux ‘find’ command. Because the remote shell was awkward to use, long-running tasks were run as background processes, and their output was stored ephemerally in the Linux shared-memory filesystem ‘/dev/shm’ to avoid disk writes that a later forensic investigation could recover. Files of interest were then inspected individually to learn about the infrastructure, internal network, active services, additional internal hosts, and credential pairs defined in configuration files. This system alone held plenty of interesting material after an initial pass, but in an adversarial intrusion he would immediately have begun probing the other hosts discovered, primarily database servers and gateway, firewall, and VPN appliances; the required credentials were readily available in the readable configuration files.

The local Apache Tomcat server’s configuration contained management credentials, an easy win, and the web manager panel was enabled at its default location. Had it been disabled, a determined Cottle had the account privileges required to edit the configuration, and a basic denial of service would have prompted someone to restart the server on his behalf. Had the manager app been bound to a private, internal address, a simple tunnel or local netcat listener would have solved that problem as well.

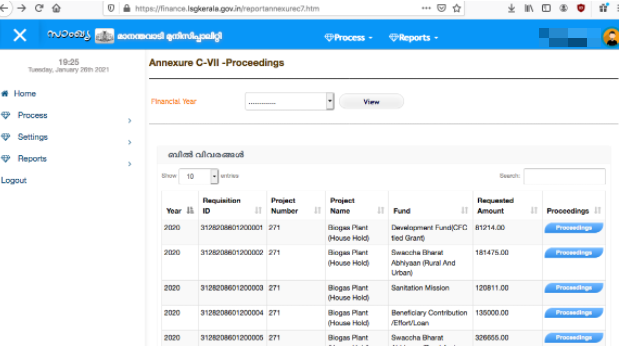

Taking the path of least resistance, the next step was to use the Tomcat credentials. Several web applications were running on the installation: a few test apps and the main financial app, which was the primary target. The Tomcat manager shows a count of active sessions per application and an interactive list of those sessions, including the attributes the application stores in each one (here: full name, age, job title, industry, account type, and so forth). A patient attacker could have scraped the session list until an active user with elevated privileges appeared, but as a proof of concept Cottle simply chose a session at random.

Java web applications typically use the “JSESSIONID” session cookie, so he created a local cookie containing the randomly selected user’s session ID. On refresh, the page still showed the login form. Cottle could have inspected the application itself through his shell, but a quick Google search with “site:[domain]” led him to a post-login section of the application where, as expected, he was signed in as the user he had randomly chosen.

With full control of the account achieved, the proof of concept was deemed a success and the engagement was wrapped up.

Check out our website

https://sakurasamurai.org

Twitter Links:

Main Page

https://twitter.com/SakuraSamuraii

Founders

https://twitter.com/johnjhacking

https://twitter.com/nicksahler

Members

https://twitter.com/JacksonHHax

https://twitter.com/Kirtaner

https://twitter.com/rej_ex

Collaborator

https://twitter.com/orpheus9001